Unmasking Dark Patterns

Dark patterns are intentional design patterns used to deceive users and make them perform actions against their will.

Deception and lies are deeply ingrained in our beloved capitalist system. User experience design couldn’t stay on the sidelines and has assimilated some existing deceptive practices while inventing new ones.

The term “dark pattern” was coined by designer Harry Brignull in 2010, when he registered the domain darkpatterns.org to define and catalog these design patterns.

Some of the most common dark patterns are:

Confirmshaming

You are pressured into activating an option or signing up for a service. If you decline, you are made to feel guilty, ashamed, foolish, or like a bad person.

Confirmshaming is the most embarrassing of all dark patterns. To decline an offer, you have to click a button that says “No, I enjoy paying full price” or similar garbage.

For example, Cosmopolitan wants us to feel “boring” if we don’t sign up.

Bait and Switch

The user sets out to perform one action but ends up triggering a completely different one, which is exactly what the deceptive website wants.

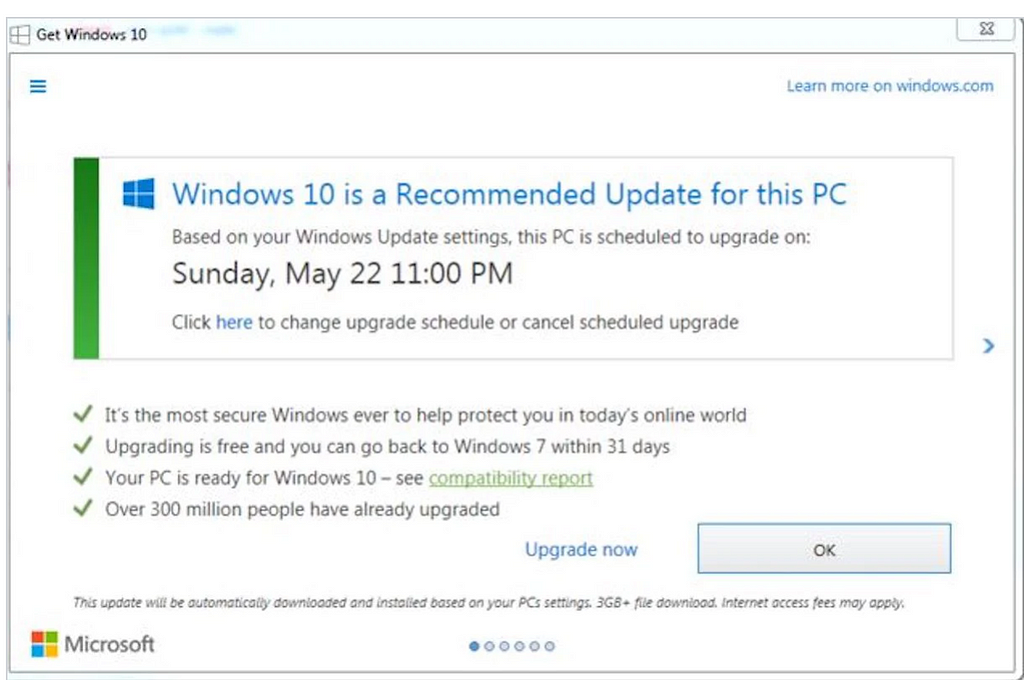

Perhaps the most famous example of bait and switch is Microsoft’s misguided approach to getting people to upgrade their Windows OS to Windows 10.

In the Windows 10 upgrade dialog, clicking the X to close it resulted in the upgrade being initiated, a completely unexpected result for most users.

Disguised Ads

Advertisements are “disguised” as regular content, video players, or navigation elements to confuse you and make you click on them unknowingly.

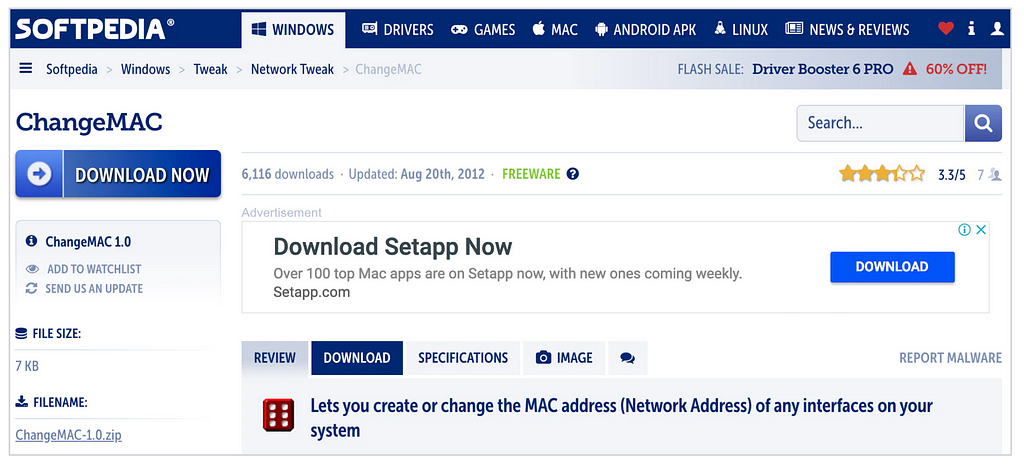

On Softpedia, a popular software download site, ads that mimic download buttons are common. These deceptive buttons use the same font and dark blue color as the real download buttons, so users often click them unintentionally.

Forced Disclosure

With forced disclosure, the user is manipulated into divulging personal information in exchange for a free or discounted offer, even though the transaction itself does not require those details. The data can then be sold to third parties for advertising purposes.

Friend Spam

A service asks for permission to access your email contacts, social network contacts, or phone for a specific purpose, such as finding friends, and then uses that access however it likes, for example to send spam to all of your friends.

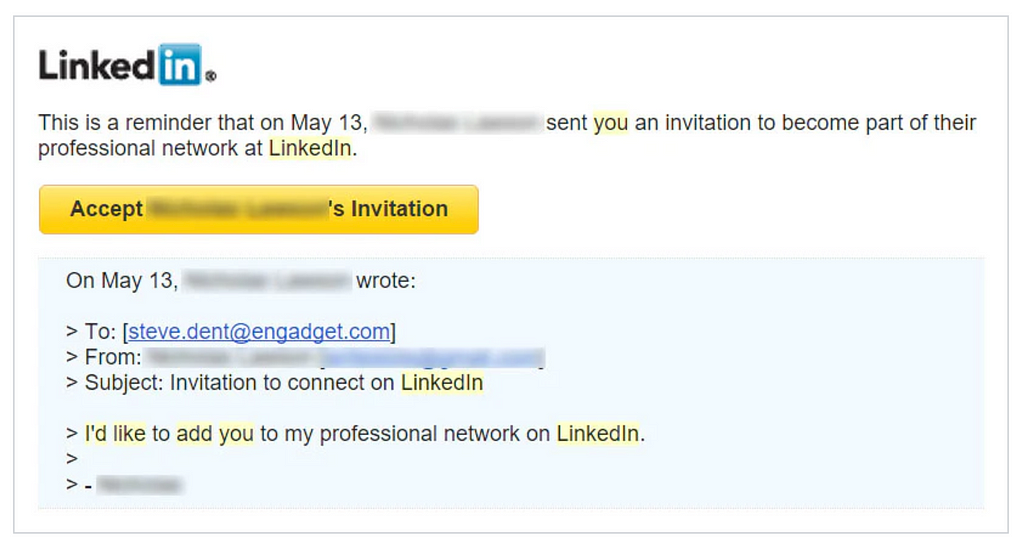

The most notable episode involving this dark pattern was when LinkedIn agreed to pay $13 million to settle a class action lawsuit over this practice.

Here’s the story: back in early 2010, LinkedIn introduced a new feature called “Add to network” on its website. The intention was to make it easier for users to connect with people they knew by letting LinkedIn access their contacts. That sounds like a good idea, right?

Well, it didn’t go as planned. Instead of receiving a single email saying “Let’s connect on LinkedIn,” users’ contacts received repeated follow-up emails that gave the impression they came directly from the users themselves. This didn’t sit well with users and led to a class action lawsuit, which LinkedIn ultimately settled.

Hidden Costs

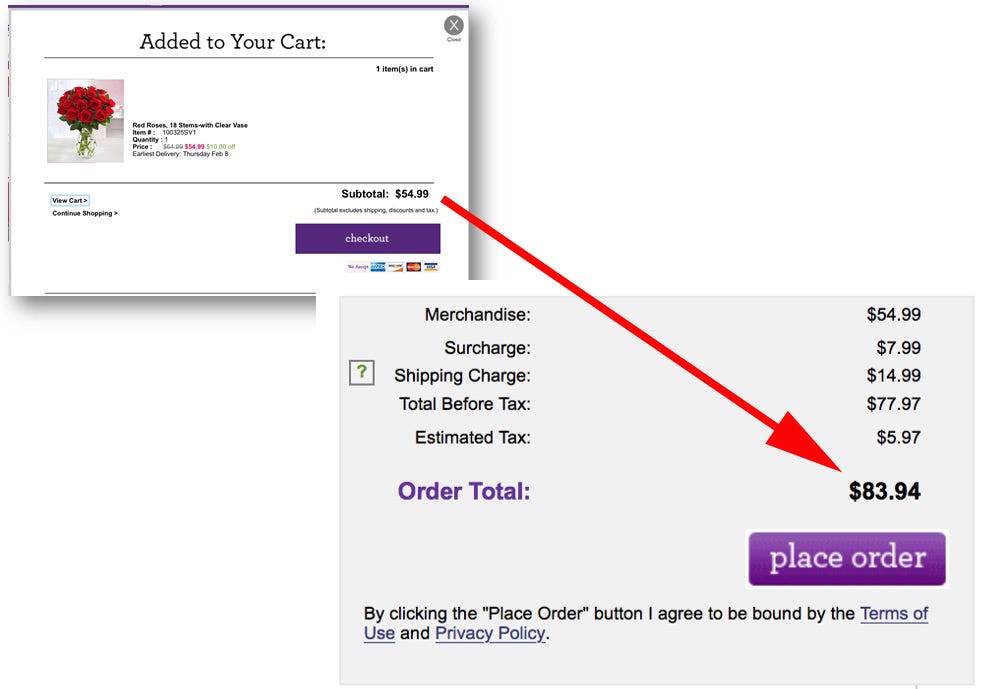

This pattern typically appears at the last step of the purchase process, when extra costs you were not aware of, such as delivery charges or taxes, suddenly show up. Unlike Sneak into Basket, where an additional item is quietly added to your cart along the way, hidden costs only appear at the very last step.

On the 1-800-Flowers website, the subtotal was $54.99 when the flowers were added to the cart. The second image shows that the user would actually end up paying $83.94.

Intentional Misdirection

As the name suggests, the design draws the user’s attention away from what really matters, so that they overlook something important and carry on with the process.

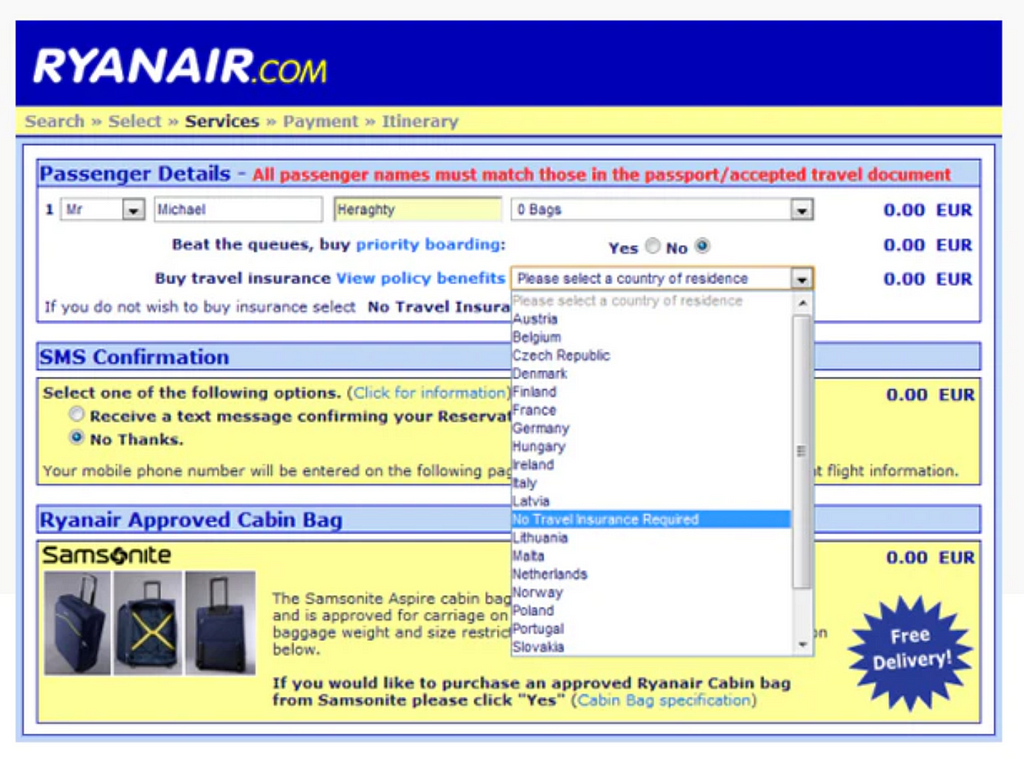

Ryanair’s design is infamous for its intentional misdirection tactics. When booking a flight, users are prompted to select their country of residence — a seemingly mandatory question. Naturally, most users choose their actual country of residence.

However, here’s the catch: the question is actually related to buying travel insurance. Hidden among the list of countries is the option ‘No travel insurance required,’ sandwiched between Latvia and Lithuania. Unaware of this trick, users end up unintentionally purchasing unnecessary travel insurance.

This deliberate scheme is a prime example of a money-grabbing strategy that wipes out user trust.

Privacy Zuckering

Privacy Zuckering is a term coined by the Electronic Frontier Foundation (EFF) to criticize Facebook’s confusing privacy settings. It is named after Facebook’s CEO, Mark Zuckerberg.

“Needed: A new word that means ‘deliberately confusing jargon and user-interfaces which trick users into sharing more info than they want to’”

Very often, when you use an online service, the fine print buried in the terms and conditions grants the website permission to sell your personal data to other companies.

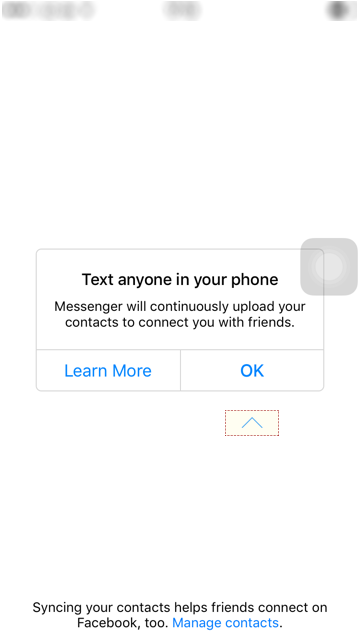

Take the Facebook Messenger app, for example. When you install it on your phone (as a separate app, mind you!), it courteously asks for permission to access your contacts. This information is then used to categorize your interests, hobbies, and groups, enabling Facebook to target you and your friends with ads.

In the end:

Dark patterns can be lucrative in the short term, but in the long run they almost always damage an organization’s brand.

The alternative to dark patterns is good persuasive design that convinces users, in a positive and honest way, to carry out the desired actions.

In 2020, the European Commission committed in its New Consumer Agenda to strengthening consumer rights, including measures against dark patterns. In 2022, the European Data Protection Board published guidelines on recognizing and avoiding dark patterns.

For me, in a world where technology seems to be taking over, the focus is on designs that users can interact with safely and ethically.

Originally published at valerian-kleinschnitz.de on June 29, 2023.


Dark Patterns: The Dark Side of UX was originally published in UX Planet on Medium.